Realtime Raytracing - Part 1

Welcome to the Gimpy Software article on creating a GPU-powered Raytracer! (Part 1)

History

I've always been interested in computer graphics implementations. As a teenager in the 90s I used to watch 4KB and 64KB graphics demos and be amazed at the effects I saw, all being drawn in 'real time'. This prompted me to recreate some of the demo effects, and inspired my first experiments in 3D. In those days it meant hand-rolling my own assembly code to plot pixels, lines, polygons, etc. - a very challenging, but rewarding learning experience!

As time moved on, and CPUs got more powerful, I developed an interest in ray tracing. I added it to my mental 'bucket list' of computing projects, but never seemed to have the time to write my own ray tracer.

Fast forward (quite!) a few years, and finally I achieved my goal - I wrote a simple ray tracer in C++. It gave good results, and the implementation was surprisingly simple. I was astounded at how little code was needed to achieve some visual output, and then again at how a few (relatively simple!) incremental tweaks to the ray tracing algorithm could result in significant improvements in the rendered scene.

I'm now going to revisit this project, but this time using a GLSL pixel shader - allowing REAL-TIME ray tracing, something I couldn't even contemplate before. Instead of your CPU doing the image processing, a pixel shader lets your GPU do it, and in a very 'parallel' fashion. To keep the development cycle as fast as possible I will use an online pixel shader editor:

http://pixelshaders.com/editor/

This compiles the code 'as you type' which makes seeing the results of your changes immediate - I totally recommend the site.
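
As a rough mental model of what 'parallel' means here: the shader's main() is run once for every pixel, and each run depends only on that pixel's own inputs. The C++ sketch that follows imitates this on the CPU with an ordinary loop. It is an illustration only. `Rgba` and `runPixelShader` are hypothetical names. It also assumes that the editor's `position` value runs from 0.0 to 1.0 across the canvas and is sampled at pixel centres.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Stand-in for a GLSL vec4 colour (hypothetical type for this sketch).
struct Rgba {
    double r, g, b, a;
};

// Runs `shade` once per pixel, the way a GPU runs a pixel shader's main()
// once per pixel. `shade(x, y)` receives a normalised position in [0, 1]
// (assumed to match the editor's `position`) and returns that pixel's colour.
// No call reads another pixel's result, which is what lets a GPU run them all
// at once; this CPU version simply runs them one after another.
template <typename Shader>
std::vector<Rgba> runPixelShader(int width, int height, Shader shade) {
    assert(width > 0 && height > 0);
    std::vector<Rgba> image(static_cast<std::size_t>(width) * height);
    for (int py = 0; py < height; ++py) {
        for (int px = 0; px < width; ++px) {
            // Sample at the pixel centre (an assumption about interpolation).
            double x = (px + 0.5) / width;
            double y = (py + 0.5) / height;
            image[static_cast<std::size_t>(py) * width + px] = shade(x, y);
        }
    }
    return image;
}
```

For example, `runPixelShader(640, 480, [](double x, double y) { return Rgba{x, y, 1.0, 1.0}; })` fills an image with a simple colour gradient. The GPU produces the same kind of result, but it runs many of these per-pixel calls side by side.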

Raytracing

I don't want to go into too much detail about how ray tracing works, as there are many articles online which describe the process much better than I can!

When I picture the rays in my head I always seem to think of 'pea shooters'. For each pixel in the 2D image of the scene I imagine firing a pellet from where I'm sitting in front of the monitor through that pixel. The 'pellet' (or 'ray') travels on through the 3D world until it 'hits' an object. If no hits occur the pixel is black; otherwise the color of the pixel is calculated using the properties of the object that was hit. In the simplest case the pixel color is taken directly from the object, e.g. if the object is red, the pixel color will match.
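
Here is a small C++ sketch of the 'pellet' for one pixel. It mirrors the ray set-up in the shader's main() further down: the same Y flip, the same cameraDist of 2.0, and a ray that starts on the image plane and points away from the viewer. The `Vec3`, `Ray` and `primaryRay` names are hypothetical, and (u, v) is assumed to run from 0.0 to 1.0 like the editor's `position`.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 {
    double x, y, z;
};

// A ray ("pellet"): where it starts and which way it travels.
struct Ray {
    Vec3 pos;
    Vec3 dir;  // Unit length.
};

// Builds the ray for one pixel, mirroring the set-up in the shader's main().
// (u, v) stands in for the editor's `position`, assumed to be in [0, 1].
Ray primaryRay(double u, double v) {
    assert(u >= 0.0 && u <= 1.0 && v >= 0.0 && v <= 1.0);
    const double cameraDist = 2.0;
    double py = 1.0 - v;  // Same Y flip as the shader.
    double x = u - 0.5;
    double y = 0.5 - py;
    // The unnormalised direction is (x, y, 1): the line from the eye at
    // (0, 0, -cameraDist - 1) through the point (x, y, -cameraDist).
    double len = std::sqrt(x * x + y * y + 1.0);
    return {{x, y, -cameraDist}, {x / len, y / len, 1.0 / len}};
}
```

Tracing any of these rays backwards to z = -3 lands on (0, 0, -3), so that point plays the part of 'where I'm sitting'. The pixel grid itself lies on the plane z = -2.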

More effects are added by considering two things:

1) Can the point at which the ray hits the object be 'seen' by a light source?

2) What happens to the ray after hitting the object? (i.e. how does it 'bounce'?)

(1) will let us implement per-pixel illumination and shadows.

(2) will let us implement reflections.

...all covered in later articles. In the last article we will have a real-time ray tracer which produces output like this:

I’ll try make sure the code comments are regular enough that the code is easy to follow and understand.

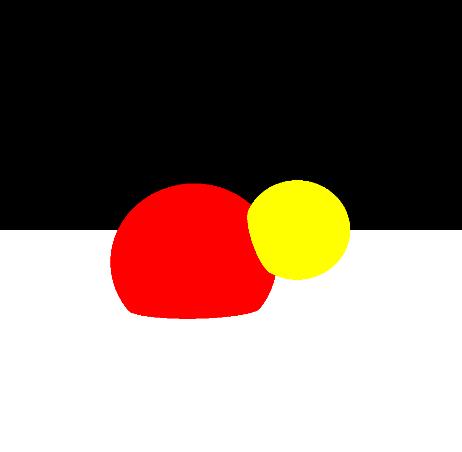

Our first iteration of the ray tracer will concentrate on firing rays into our scene, which will just contain a ‘floor’ plane and a couple of spheres. Each of these shapes can be described using relatively simple maths. For each ray we perform a calculation to determine whether it intersects each object. If no ‘hit’ occurs, we just need to plot a black pixel. If one or more hits occur we find the nearest object (i.e. the ‘hit’ with the shortest ray distance), and use the properties of that object to color the pixel.
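
For the curious, here is the maths that the sphereHit() and planeHit() functions in the listing rely on. The symbols are my own shorthand. $\mathbf{o}$ is the ray start (rayPos) and $\mathbf{d}$ is its direction (rayDir). $\mathbf{s}$ and $r$ are the sphere's centre (origin) and radius, with $\mathbf{p} = \mathbf{o} - \mathbf{s}$. $y_0$ is the floor height. A point on the ray is $\mathbf{o} + \lambda\mathbf{d}$. Substituting it into the sphere equation $\lVert \mathbf{o} + \lambda\mathbf{d} - \mathbf{s} \rVert^2 = r^2$, and into the plane equation $y = y_0$, gives:

$$
\underbrace{(\mathbf{d}\cdot\mathbf{d})}_{a}\,\lambda^2 + \underbrace{2\,(\mathbf{p}\cdot\mathbf{d})}_{b}\,\lambda + \underbrace{(\mathbf{p}\cdot\mathbf{p} - r^2)}_{c} = 0
\quad\Rightarrow\quad
\lambda = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a}
$$

$$
o_y + \lambda\, d_y = y_0
\quad\Rightarrow\quad
\lambda = \frac{y_0 - o_y}{d_y}, \qquad d_y \neq 0
$$

If $b^2 - 4ac < 0$, the ray misses the sphere. Otherwise the smallest positive $\lambda$ is the nearest hit. Because main() normalizes rayDir, $\lambda$ is also the distance travelled along the ray.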

Here’s the code, which you can just cut and paste into the shader editor:

    precision mediump float;

    varying vec2 position; // Texture position being processed.
    uniform float time;    // The current time (not used until we add animation).

    // Calculate whether a ray hits a sphere.
    // If it hits, return the distance to the object.
    float sphereHit(inout vec3 rayPos, inout vec3 rayDir, vec3 origin, float radius, inout vec4 rgb)
    {
        vec3 p = rayPos - origin;

        // We need to solve a quadratic to find the distance.
        float a = dot(rayDir, rayDir);
        float b = 2.0 * dot(p, rayDir);
        float c = dot(p, p) - (radius * radius);

        if (a == 0.0)
            return 999.0; // No hit.

        float f = b * b - 4.0 * a * c;
        if (f < 0.0)
            return 999.0; // Negative discriminant: the ray misses the sphere.

        float lamda1 = (-b + sqrt(f)) / (2.0 * a);
        float lamda2 = (-b - sqrt(f)) / (2.0 * a);
        if (max(lamda1, lamda2) <= 0.0)
            return 999.0; // Both intersections are behind the ray.

        // Find the nearest hit point in front of the ray (ignore a negative root).
        if (lamda1 <= 0.0)
            lamda1 = lamda2;
        else if (lamda2 <= 0.0)
            lamda2 = lamda1;
        float dist = min(lamda1, lamda2);

        // Reflect ray off the surface.
        rayPos = rayPos + dist * rayDir;
        vec3 normal = normalize(rayPos - origin);
        rayDir = reflect(rayDir, normal);

        return dist;
    }

    // Calculate whether a ray hits the floor plane.
    // If it hits, return the distance to the object.
    float planeHit(inout vec3 rayPos, inout vec3 rayDir, float y, inout vec4 rgb)
    {
        if (rayDir.y == 0.0)
            return 999.0; // Ray is parallel to the plane.

        float lamda = (y - rayPos.y) / rayDir.y;
        float dist = lamda * length(rayDir);
        // A negative distance means the plane is behind the ray; castRay() ignores it.

        // Reflect ray off the surface.
        rayPos = rayPos + dist * normalize(rayDir);
        rayDir = reflect(rayDir, vec3(0.0, 1.0, 0.0));

        return dist;
    }

    // Check for a collision between ray and object.
    // If there is one, return the distance to the object.
    float objectHit(int id, inout vec3 rayPos, inout vec3 rayDir, inout vec4 rgb)
    {
        if (id == 0)
        {
            // Object 0 - Red sphere.
            rgb = vec4(1.0, 0.0, 0.0, 1.0);
            return sphereHit(rayPos, rayDir, vec3(-0.3, -0.2, 0.0), 0.5, rgb);
        }

        if (id == 1)
        {
            // Object 1 - Yellow sphere.
            rgb = vec4(1.0, 1.0, 0.0, 1.0);
            return sphereHit(rayPos, rayDir, vec3(0.3, 0.0, -0.2), 0.3, rgb);
        }

        // Object 2 - The floor.
        rgb = vec4(1.0);
        return planeHit(rayPos, rayDir, -0.5, rgb);
    }

    // The bulk of the raytrace work is done here.
    // Cast a ray through the scene and see if it hits an object.
    vec4 castRay(inout vec3 inRayPos, inout vec3 inRayDir)
    {
        vec4 rgb = vec4(0.0);    // Default color.
        float d_nearest = 999.0; // Distance to the nearest hit object.
        vec4 hit_rgb;
        vec3 bouncedRayPos, bouncedRayDir;
        vec3 testRayPos, testRayDir;

        // Check for a collision with each of the objects in the scene.
        for (int id = 0; id < 3; id++)
        {
            testRayPos = vec3(inRayPos);
            testRayDir = vec3(inRayDir);
            float d = objectHit(id, testRayPos, testRayDir, hit_rgb);

            // If there was a hit, and it occurred nearer than any other object...
            if (d > 0.0 && d < d_nearest)
            {
                // ...remember it.
                d_nearest = d;
                rgb = hit_rgb;
                bouncedRayPos = vec3(testRayPos);
                bouncedRayDir = vec3(testRayDir);
            }
        }

        // If we hit something...
        if (d_nearest > 0.0 && d_nearest < 999.0)
        {
            inRayPos = bouncedRayPos;
            inRayDir = bouncedRayDir;
        }

        return rgb;
    }

    // The entry point to the shader.
    void main() {
        // Invert the texture Y coordinate so our scene renders the right way up.
        vec2 p = vec2(position.x, 1.0 - position.y);

        // Set the camera properties.
        float cameraDist = 2.0;

        // Define the position and direction of the 'ray' to fire.
        vec3 rayPos = vec3(p.x - 0.5, 0.5 - p.y, -cameraDist);
        vec3 rayDir = normalize(vec3(p.x - 0.5, 0.5 - p.y, 1.0));

        // Fire the ray into the scene.
        vec4 rgb = castRay(rayPos, rayDir);

        if (rgb.a > 0.0)
        {
            // The ray hit an object!
        }
        else
        {
            // The ray missed all objects - Plot a black pixel.
            rgb = vec4(0.0, 0.0, 0.0, 1.0);
        }

        gl_FragColor = rgb;
    }
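
A shader is awkward to unit-test, so here is a sketch of a CPU-side C++ port of sphereHit(), planeHit(), objectHit() and the nearest-hit loop from castRay(), using the same scene values. It is an illustration, not part of the shader. The `Vec3`/`Ray` types, the `kNoHit` constant, and the choice to return an object id (rather than a color) are my own assumptions. reflect() is written out with the formula that GLSL's built-in uses: I - 2 * dot(N, I) * N.

```cpp
#include <cassert>
#include <cmath>

// Minimal stand-in for GLSL's vec3 (hypothetical helper for this CPU port).
struct Vec3 {
    double x, y, z;
};

Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(double s, Vec3 v) { return {s * v.x, s * v.y, s * v.z}; }
double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 v) { return (1.0 / std::sqrt(dot(v, v))) * v; }

// Same formula as GLSL's built-in reflect(I, N); N is expected to be unit length.
Vec3 reflect(Vec3 i, Vec3 n) { return i - (2.0 * dot(n, i)) * n; }

const double kNoHit = 999.0;  // The shader's "no hit" sentinel distance.

struct Ray {
    Vec3 pos, dir;
};

// Port of sphereHit(): on a hit, moves the ray to the hit point, reflects its
// direction about the surface normal and returns the distance travelled.
double sphereHit(Ray& ray, Vec3 origin, double radius) {
    assert(radius > 0.0);
    Vec3 p = ray.pos - origin;
    double a = dot(ray.dir, ray.dir);
    double b = 2.0 * dot(p, ray.dir);
    double c = dot(p, p) - radius * radius;
    if (a == 0.0) return kNoHit;
    double f = b * b - 4.0 * a * c;
    if (f < 0.0) return kNoHit;  // The ray misses the sphere.
    double l1 = (-b + std::sqrt(f)) / (2.0 * a);
    double l2 = (-b - std::sqrt(f)) / (2.0 * a);
    if (std::fmax(l1, l2) <= 0.0) return kNoHit;  // Sphere is behind the ray.
    if (l1 <= 0.0) l1 = l2;
    else if (l2 <= 0.0) l2 = l1;
    double dist = std::fmin(l1, l2);  // Nearest hit in front of the ray.
    ray.pos = ray.pos + dist * ray.dir;
    ray.dir = reflect(ray.dir, normalize(ray.pos - origin));
    return dist;
}

// Port of planeHit() for the horizontal plane y = planeY.
double planeHit(Ray& ray, double planeY) {
    if (ray.dir.y == 0.0) return kNoHit;  // Parallel to the plane.
    double lambda = (planeY - ray.pos.y) / ray.dir.y;
    double dist = lambda * std::sqrt(dot(ray.dir, ray.dir));
    ray.pos = ray.pos + dist * normalize(ray.dir);
    ray.dir = reflect(ray.dir, Vec3{0.0, 1.0, 0.0});
    return dist;  // Negative if the plane is behind the ray.
}

// Port of objectHit(), same scene: 0 = red sphere, 1 = yellow sphere, 2 = floor.
double objectHit(int id, Ray& ray) {
    if (id == 0) return sphereHit(ray, {-0.3, -0.2, 0.0}, 0.5);
    if (id == 1) return sphereHit(ray, {0.3, 0.0, -0.2}, 0.3);
    return planeHit(ray, -0.5);
}

// Port of castRay()'s nearest-hit loop. Returns the id of the nearest object
// hit (-1 if none) instead of a colour, and reports its distance.
int castRay(Ray& ray, double& nearestDist) {
    int hitId = -1;
    double dNearest = kNoHit;
    Ray bounced = ray;
    for (int id = 0; id < 3; ++id) {
        Ray test = ray;  // Every object is tested against the original ray.
        double d = objectHit(id, test);
        if (d > 0.0 && d < dNearest) {
            dNearest = d;
            hitId = id;
            bounced = test;
        }
    }
    if (hitId >= 0) ray = bounced;  // Hand back the bounced ray, as the shader does.
    nearestDist = dNearest;
    return hitId;
}
```

For example, take the ray through the centre of the image, which starts at (0, 0, -2) with direction (0, 0, 1). It comes back with id 0, the red sphere, at a distance of about 1.654. A ray fired straight up returns -1 and the 999.0 sentinel.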

The code is documented, but the most important functions are:

• castRay - Cast a ray through the scene and see if it hits an object.

• objectHit - Check for a collision between a ray and an object.

…and produces output which looks like this:

As you can see, we only have flat shading and the colors are quite plain, but the placement of the spheres already shows interesting curved intersections: between the two spheres themselves, and where the red sphere meets the floor.
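
A quick check with the centres and radii passed in objectHit() shows where those curves come from. The spheres are closer together than the sum of their radii. The red sphere's lowest point is below the floor at $y = -0.5$, while the yellow sphere's lowest point is above it:

$$
\lVert \mathbf{s}_{\text{red}} - \mathbf{s}_{\text{yellow}} \rVert = \sqrt{(-0.6)^2 + (-0.2)^2 + (0.2)^2} = \sqrt{0.44} \approx 0.663 < 0.5 + 0.3 = 0.8
$$

$$
-0.2 - 0.5 = -0.7 < -0.5, \qquad 0.0 - 0.3 = -0.3 > -0.5
$$

The red sphere's centre is $0.3$ above the floor. The floor plane therefore cuts it along a circle of radius $\sqrt{0.5^2 - 0.3^2} = 0.4$.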

In the next article we'll be extending our implementation to cover reflections and a simple animation. The former will add another level of realism, and the latter will help show the real-time nature of the rendering.